In this blog post we’ll talk about time, how it works, why it’s important to computers, and how NTP can be used to manage the time on computer systems.

“You may delay, but time will not.”

― Benjamin Franklin

Intro to NTP

NTP (Network Time Protocol) allows computers on a network to synchronise their system clocks, often to within a few milliseconds of each other. It does this by exchanging packets over the network and performing calculations based on the contents of these packets. Here is a simplified breakdown of this process, in which two systems, A (the client) and B (the server), synchronise their time:

- System A inserts its current time into a packet, and sends this to system B

- Upon receiving the packet from system A, system B then inserts its current time into the packet and sends it back to system A

- When system A receives this packet, it will use the contents of the packet to estimate the time difference between the systems

- System A will use this time difference to adjust the system time, so that it is in sync with system B

This is often repeated multiple times, with every iteration resulting in the times of the systems getting closer and closer together. The above steps assume that system B’s time is correct.
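
The exchange above can be made concrete with a little arithmetic. The following is a minimal Python sketch, not a description of how any particular NTP client is implemented. It assumes the four timestamps that a real NTP exchange records (the simplified steps above mention only two), and the function name `estimate_offset_and_delay` and the sample values are purely illustrative.

```python
def estimate_offset_and_delay(t1, t2, t3, t4):
    """Estimate clock offset and round-trip delay from one exchange.

    t1: client clock when the request left system A
    t2: server clock when the request reached system B
    t3: server clock when the reply left system B
    t4: client clock when the reply reached system A
    """
    # Averaging the two one-way differences cancels the network delay,
    # provided the delay is roughly the same in both directions.
    offset = ((t2 - t1) + (t3 - t4)) / 2
    # Time spent on the wire, excluding the server's processing time.
    delay = (t4 - t1) - (t3 - t2)
    return offset, delay


# Illustrative values: B's clock is 0.5 s ahead of A's, each network
# leg takes 20 ms, and B spends 1 ms handling the request.
offset, delay = estimate_offset_and_delay(100.000, 100.520, 100.521, 100.041)
print(f"offset: {offset:+.3f} s, delay: {delay:.3f} s")
```

A positive offset means system A’s clock is behind system B’s and needs to move forward. The estimate is only as good as the assumption that the outbound and return trips take about the same time, which is one reason the exchange is repeated.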

Why is NTP needed?

You may be wondering why computers go through so much “trouble” to synchronise their clocks, or why there is an entire protocol dedicated to it. People’s watches are often out by a couple of minutes, and they manage just fine, so why are computers any different? Well, that’s just it: computers are very different. The smallest unit of time most people use every day is the second. They microwave their lunch for 45 seconds, they wait 40 seconds for their tea to brew, they call someone back in 30 seconds. That’s fine, and it doesn’t really matter if you cook your lunch for a few seconds longer, as not much happens in a second.

However, a second for a computer is a huge amount of time. A moderately powerful modern computer can perform 14,000,000,000 (that’s 14 billion) operations every second. So a one-second disparity between the clocks on two systems leaves room for 14 billion operations to take place in the gap. To be completely fair, each of these many operations represents very little in the grand scheme of things, but the example still holds: computers are extremely time-sensitive.

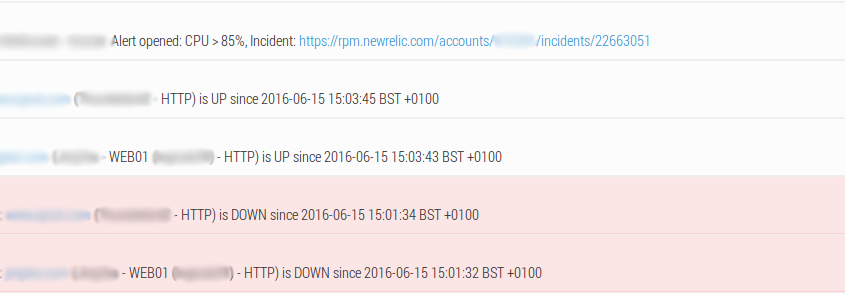

What happens when system times are out of sync?

When the times on systems are out of sync, some really odd and horrible things can happen:

- Logs on different systems won’t correspond to each other. Let’s say your application breaks, and you want to look through the logs and see what the problem was. You know the issue happened at, for example, 03:17:54. When you check the logs, you look for the entries at 3AM and try to figure out what went wrong. What if one of the systems’ time is wrong? Even though a log entry says something happened at 3AM, you could actually be looking at what happened at 4AM. This can be manageable if you’re only comparing logs between 2 systems, but when you start dealing with more and more systems, this becomes impossible pretty quickly.

- Tracing emails can become very difficult. When you send an email, chances are it passes through multiple servers before reaching its destination. If you want to debug an email issue, you’re probably going to be checking headers (additional bits of data transmitted with the message), which contain timestamps added by each server the email passes through. If the times are out of sync on the systems, it can make it really difficult to trace the path of a message.

- CRON jobs could run at the wrong time. Let’s say you have a CRON job that runs at 9PM. Its purpose is to, for example, kick all users off the system and back up their files from that day. But what if the system time is wrong, and this CRON job ends up running at 2PM instead? People would be kicked off the system in the middle of the working day, potentially resulting in a big loss of data and/or productivity.

- Authentication services can be affected. Lots of two-factor authentication systems work on the idea of a one-time password. This is usually calculated based on a shared secret key and the current timestamp. In order to authenticate successfully, a user needs to input the one-time password that the server is expecting. What happens if the client and server’s system times are different? Authentication fails, because the server is expecting a different password than the one provided by the user.
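
To illustrate the last point, here is a rough Python sketch of a time-based one-time password following RFC 6238 (HMAC-SHA1). It is an illustration rather than the code of any specific authentication product: the function name `totp` is our own, the secret is the published RFC test value rather than a real key, and the two timestamps are chosen so that a small clock difference straddles a 30-second boundary.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: float, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time password (HMAC-SHA1)."""
    # Both sides derive a counter from their own clock: the number of
    # whole time steps (30 s by default) since the Unix epoch.
    counter = int(unix_time // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low 4 bits of the last byte
    # select which 4 bytes of the digest become the code.
    pos = digest[-1] & 0x0F
    number = struct.unpack(">I", digest[pos:pos + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


secret = b"12345678901234567890"   # test secret from RFC 6238, not a real key
server_time = 1111111109           # the server's idea of "now"
client_time = server_time + 2      # the client's clock is only 2 s ahead...

# ...but that is enough to land in the next 30-second step, so the
# password the client generates is not the one the server expects.
print("server expects:", totp(secret, server_time, digits=8))
print("client sends:  ", totp(secret, client_time, digits=8))
```

Real deployments often accept passwords from an adjacent time step to tolerate small amounts of drift, but a clock that is minutes out will still cause logins to fail.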

How to set up NTP on a Linux system & why servers should use UTC regardless of time zone

Setting up a Linux system to synchronise its system clock is really easy, and we’d always recommend doing this, especially on servers.

First, make sure you have an NTP client installed on your system. Most systems will come with one installed by default. A lot of the servers we manage run Ubuntu, so I’ll show you how to check that an NTP client is installed on an Ubuntu system. Run this command in a terminal:

dpkg --list | grep ntp

You should see the package ntp listed. If nothing is returned, then you don’t have an NTP client installed. You can install it by running the following command with root privileges:

sudo apt-get install ntp

The most important thing to configure with NTP is which servers to synchronise time with. These are defined in the /etc/ntp.conf file. If you check this file, you’ll find lines looking like the following:

server 0.ubuntu.pool.ntp.org

server 1.ubuntu.pool.ntp.org

server 2.ubuntu.pool.ntp.org

server 3.ubuntu.pool.ntp.org

These lines specify which NTP servers should be used. We typically change these to use the “standard” UK time-servers, like so:

server 0.uk.pool.ntp.org

server 1.uk.pool.ntp.org

server 2.uk.pool.ntp.org

server 3.uk.pool.ntp.org

These servers are all members of the NTP pool project. The NTP pool is a collection of publicly accessible NTP servers, which anyone can join (though there are a few requirements). We at Dogsbody Technology Ltd actually have a number of our own servers in the pool, providing time synchronisation capabilities for everybody to use. This helps to keep everybody’s system times in sync.
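
If you want to check that a pool server is reachable from your machine, the following Python sketch sends a single SNTP-style request and compares the reply with the local clock. Treat it as a rough diagnostic under stated assumptions, not a replacement for the NTP client: the function names are our own, it needs outbound UDP access on port 123, and it ignores network delay, so the result is only approximate.

```python
import socket
import struct
import time

# Seconds between the NTP epoch (1900-01-01) and the Unix epoch (1970-01-01).
NTP_EPOCH_OFFSET = 2208988800


def build_request() -> bytes:
    """Build a 48-byte client request: LI=0, version=3, mode=3 (client)."""
    return b"\x1b" + 47 * b"\x00"


def parse_transmit_time(packet: bytes) -> float:
    """Return the server's transmit timestamp as Unix time."""
    if len(packet) < 48:
        raise ValueError("NTP packet too short")
    # Bytes 40-47 hold the transmit timestamp: 32-bit seconds since
    # 1900 followed by a 32-bit binary fraction of a second.
    seconds, fraction = struct.unpack("!II", packet[40:48])
    return seconds - NTP_EPOCH_OFFSET + fraction / 2**32


def query_offset(host: str = "0.uk.pool.ntp.org", timeout: float = 5.0) -> float:
    """Rough offset (server time minus local time), ignoring network delay."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_request(), (host, 123))
        packet, _ = sock.recvfrom(512)
    return parse_transmit_time(packet) - time.time()
```

Calling `query_offset()` returns the approximate number of seconds by which the local clock differs from the server. On a correctly synchronised system this should be a small fraction of a second.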

If you’re having issues with NTP, or anything else for that matter, on your servers, then please get in touch and we’ll be happy to help.

Feature image made by Sean MacEntee licensed CC BY 2.0