Duplicacy is an open source backup tool which supports a large number of storage back-ends, including many cloud offerings, whilst also providing many other useful features. We recently implemented a duplicacy-based backup solution for a customer, and wanted to share our experience to help out anybody looking to implement duplicacy themselves.

Installation

Duplicacy is written in Go, so it can be downloaded and compiled from the command line. However, this involves installing Go on the system you wish to back up, which may not always be an option. Fortunately, duplicacy also provides binary releases, which can be downloaded and run directly.

To install duplicacy on a Linux system, the steps are as follows:

wget https://github.com/gilbertchen/duplicacy/releases/download/v2.1.0/duplicacy_linux_x64_2.1.0

sudo mv duplicacy_linux_x64_2.1.0 /usr/local/bin/duplicacy

sudo chmod +x /usr/local/bin/duplicacy

You can then run duplicacy by simply running the “duplicacy” command in your terminal.
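The same steps can be wrapped in a small script so that the version is set in one place. This is only a sketch: the `VERSION` variable and the `release_url` and `install_duplicacy` function names are our own, and the asset name is assumed to follow the pattern of the v2.1.0 x64 Linux release shown above.

```shell
# Sketch: install a pinned duplicacy release on x64 Linux.
# VERSION and the function names are illustrative, not part of duplicacy.
VERSION="${VERSION:-2.1.0}"

# Build the download URL, assuming the asset naming used by the v2.1.0 release.
release_url() {
    local version="$1"
    echo "https://github.com/gilbertchen/duplicacy/releases/download/v${version}/duplicacy_linux_x64_${version}"
}

# Download to a temporary file, then install it with the executable bit set.
install_duplicacy() {
    local tmp status
    tmp="$(mktemp)" || return 1
    wget -O "$tmp" "$(release_url "$VERSION")" || { rm -f "$tmp"; return 1; }
    sudo install -m 0755 "$tmp" /usr/local/bin/duplicacy
    status=$?
    rm -f "$tmp"
    return "$status"
}

# Call install_duplicacy to perform the installation.
```

Using `install -m 0755` combines the move and the chmod into one step, so the binary is never left in place without its executable bit.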

Setting up your storage

As mentioned above, duplicacy supports an impressive number of storage back-ends. As of the time of writing, they are:

- Local disk

- SFTP

- Dropbox

- Amazon S3

- Wasabi

- DigitalOcean Spaces

- Google Cloud Storage

- Microsoft Azure

- Backblaze B2

- Google Drive

- Microsoft OneDrive

- Hubic

- OpenStack Swift

- WebDAV (under beta testing)

- pCloud (via WebDAV)

- Box.com (via WebDAV)

The two options that we’ve used are SFTP and AWS (Amazon Web Services) S3. To back up a system over SFTP, all you need is a working SFTP user on the remote system. No additional setup is required.
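For SFTP, the storage is addressed with an `sftp://` URL. Here is a minimal sketch, assuming a hypothetical user `backup` on the host `backup.example.com` and a storage directory `duplicacy/johns-desktop`. As far as we know, a single slash after the host makes the path relative to that user's home directory. The helper name is our own.

```shell
# Sketch: build an SFTP storage URL for duplicacy.
# The user, host and path passed in are placeholders you supply.
sftp_storage_url() {
    local user="$1" host="$2" path="$3"
    echo "sftp://${user}@${host}/${path}"
}

# Example (run from the directory you want to back up):
#   sudo duplicacy init johns-desktop "$(sftp_storage_url backup backup.example.com duplicacy/johns-desktop)"
```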

The setup for Amazon S3 is a little more involved. In summary, the steps are:

- Create an Amazon S3 bucket in your preferred region

- Create an IAM policy granting permissions on this bucket

- Create an IAM user and assign them this policy

- Configure duplicacy to use this user and bucket

Creating an S3 bucket

Creating a bucket is pretty straightforward. Log in to your AWS account, go to the S3 service, click “Create bucket”, give your bucket a name, and select a region. There are some other options when creating a bucket, but these are not relevant to this post so I’ll not cover them here.
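If you prefer the command line, the AWS CLI can create the bucket as well. This sketch assumes the AWS CLI is installed and configured with an account that is allowed to create buckets. The function name and the `DRY_RUN` switch are our own additions.

```shell
# Sketch: create an S3 bucket with the AWS CLI.
# Set DRY_RUN=1 to print the command instead of running it.
create_bucket() {
    local bucket="$1" region="$2"
    set -- aws s3api create-bucket --bucket "$bucket" --region "$region"
    # Regions other than us-east-1 need an explicit location constraint.
    if [ "$region" != "us-east-1" ]; then
        set -- "$@" --create-bucket-configuration "LocationConstraint=${region}"
    fi
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Example for the London region:
#   create_bucket your_bucket_name eu-west-2
```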

Creating an IAM policy

IAM stands for Identity and Access Management, and is central to many operations in AWS. To create your policy, navigate to the IAM service in AWS, select “policies” on the left, and click the big blue “Create policy” button at the top.

On this screen, choose the “JSON” tab. This is where we’ll specify the guts of our policy. It should look something like this:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:ListAllMyBuckets",
      "Resource": "arn:aws:s3:::*"
    },
    {
      "Effect": "Allow",
      "Action": "s3:*",
      "Resource": [
        "arn:aws:s3:::dbt-gary-duplicacy-backup-example",
        "arn:aws:s3:::dbt-gary-duplicacy-backup-example/*"
      ]
    }
  ]
}

You’ll need to replace “dbt-gary-duplicacy-backup-example” with the name of the S3 bucket you created in the last step.

When you’re happy with your policy, click “Review policy”, followed by “Save changes”.
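To avoid editing the bucket name by hand, the policy above can be generated from a small template. This is a sketch only: `write_policy` is a helper name we made up, and the policy name `duplicacy-backup` in the example is a placeholder. The commented commands assume the AWS CLI is installed and configured.

```shell
# Sketch: print the policy shown above for a given bucket name.
write_policy() {
    local bucket="$1"
    cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:ListAllMyBuckets",
      "Resource": "arn:aws:s3:::*"
    },
    {
      "Effect": "Allow",
      "Action": "s3:*",
      "Resource": [
        "arn:aws:s3:::${bucket}",
        "arn:aws:s3:::${bucket}/*"
      ]
    }
  ]
}
EOF
}

# Example:
#   write_policy your_bucket_name > policy.json
#   aws iam create-policy --policy-name duplicacy-backup --policy-document file://policy.json
```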

Creating an IAM user and assigning the policy

From the home of the IAM service, now click “Users” on the left, followed by the big blue “Add user” button at the top. Provide a name for your user, and check the “Programmatic access” box below. Click next.

On the next screen, click “Attach existing policies directly”. At the top of the list of policies now listed below, click the “Filter: Policy type” drop-down, and select “Customer managed”. Check the box for your IAM policy, and click “review” to continue, followed by “Create user” on the next page.

Your IAM user and policy have now been created.

Ensure that you save the details now presented to you (the access key and secret key), as you will need these to configure duplicacy.

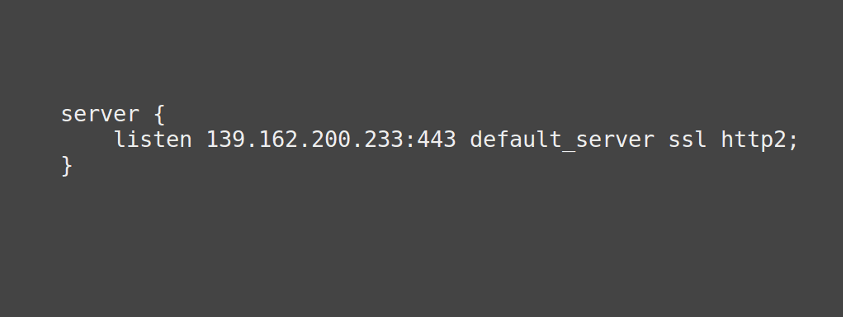
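The console steps above have AWS CLI equivalents. This is a rough sketch, assuming the AWS CLI is configured with sufficient IAM permissions. The `run` and `create_backup_user` helpers and the `DRY_RUN` switch are our own, and the user name and policy ARN are values you supply.

```shell
# Sketch: create the IAM user, attach the policy and issue an access key.
# Set DRY_RUN=1 to print the commands instead of running them.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# The final command prints the access key and secret key.
# Save them straight away, as the secret is not shown again.
create_backup_user() {
    local user="$1" policy_arn="$2"
    run aws iam create-user --user-name "$user" &&
    run aws iam attach-user-policy --user-name "$user" --policy-arn "$policy_arn" &&
    run aws iam create-access-key --user-name "$user"
}

# Example (the ARN is reported when the policy is created):
#   create_backup_user duplicacy-backup your_policy_arn
```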

Configuring duplicacy

On the system you wish to back up, navigate to the directory you wish to back up. For example, on the system we configured, this was the “/home” directory. You can now configure duplicacy. The steps are as follows:

sudo duplicacy init your_repo_name s3://your_region@amazon.com/your_bucket_name

sudo duplicacy set -key s3_id -value your_access_key

sudo duplicacy set -key s3_secret -value your_secret_key

There are a number of strings you’ll need to replace in the above snippet:

your_repo_name – The name you’d like to give to this set of backups. For example, “johns-desktop”

your_bucket_name – The name you gave your S3 bucket in the steps above.

your_region – This is the AWS region you selected for your bucket above. Please see this table, using the “region” column that corresponds to your region name. For example, “eu-west-2” for the London region.

your_access_key – This is the access key for the IAM user you created above. It will be a long string of random looking characters.

your_secret_key – This is the secret key for the IAM user you created above. It will again be a long string of random looking characters. Make sure you keep this safe, as anybody who has it can access your backups!
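As a worked example of the substitutions, the storage URL can be assembled from the region and bucket name. This is a small sketch using a helper name of our own, with the “johns-desktop” and “eu-west-2” values from the descriptions above.

```shell
# Sketch: build the S3 storage URL from a region and a bucket name.
s3_storage_url() {
    local region="$1" bucket="$2"
    echo "s3://${region}@amazon.com/${bucket}"
}

# Example for a bucket in the London region (run from the directory to back up):
#   sudo duplicacy init johns-desktop "$(s3_storage_url eu-west-2 your_bucket_name)"
```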

Running a backup

If all went well with the above, then you’re ready to run your first backup. This is as easy as running:

sudo duplicacy backup

This will back up all files under the current directory. Depending on the number and size of the files, this may take some time.
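To take backups on a schedule, the command can be wrapped in a small script and run from cron. This is a sketch under assumptions: the repository lives in “/home” as in our example, and the log file location, script path and function name are hypothetical.

```shell
#!/bin/sh
# Sketch: run a backup from the repository directory and append the output to a log.
# REPO_DIR and LOG_FILE are illustrative defaults; DUPLICACY can be overridden for testing.
REPO_DIR="${REPO_DIR:-/home}"
LOG_FILE="${LOG_FILE:-/var/log/duplicacy-backup.log}"
DUPLICACY="${DUPLICACY:-duplicacy}"

run_backup() {
    # duplicacy must be run from the directory where the repo was initialised.
    cd "$REPO_DIR" || return 1
    "$DUPLICACY" backup >>"$LOG_FILE" 2>&1
}

# Example root crontab entry (daily at 03:02), assuming this script is saved as
# /usr/local/bin/duplicacy-backup.sh and ends with a call to run_backup:
#   2 3 * * * /usr/local/bin/duplicacy-backup.sh
```

Running it from root's crontab takes the place of the `sudo` used in the interactive commands.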

Including/excluding certain files/directories from your backups

Duplicacy offers powerful filtering functionality, allowing fine-grained control over which files and directories you want to back up. The filters can be somewhat confusing to configure, but are very useful once you’ve got the hang of them. We may do a follow-up post covering these, so be sure to check back in the future.

Restoring backups from duplicacy

In order to restore from duplicacy, you need to configure your system to interact with your backups. If you’re restoring on the same system the backups were taken on, you need not take any additional steps. If you’re restoring to a different system, you need to follow the installation and duplicacy configuration steps shown above.

Once things are configured, you can view the available backups like so:

sudo duplicacy list

Note that you must be in the correct directory on your system (the one where you initialised your repo) in order to view the backups.

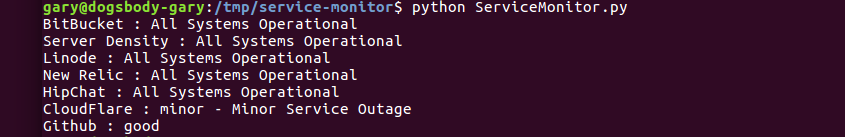

This will give you a list of your backups:

Snapshot johns-desktop revision 1 created at 2018-04-12 07:29 -hash

Snapshot johns-desktop revision 2 created at 2018-04-12 12:03

Snapshot johns-desktop revision 3 created at 2018-04-17 17:37

Snapshot johns-desktop revision 4 created at 2018-04-18 11:10

Snapshot johns-desktop revision 5 created at 2018-04-18 14:38

Snapshot johns-desktop revision 6 created at 2018-04-20 03:02

Snapshot johns-desktop revision 7 created at 2018-04-21 03:02

Snapshot johns-desktop revision 8 created at 2018-04-22 03:02

Snapshot johns-desktop revision 9 created at 2018-04-23 03:02

As you can see, each revision number is listed with the date and time it was created. A revision is just another name for a backup.
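If you want the most recent revision number in a script, the listing can be parsed. This is a sketch that relies on the “Snapshot ... revision N created at ...” line format shown above and assumes `awk` is available. The function name is our own.

```shell
# Sketch: read `duplicacy list` output on stdin and print the highest revision number.
# Lines that do not look like snapshot entries are ignored.
latest_revision() {
    awk '$1 == "Snapshot" && $3 == "revision" && $4 + 0 > max { max = $4 + 0 }
         END { if (max) print max }'
}

# Example:
#   sudo duplicacy list | latest_revision
```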

You can then restore a particular backup. For example, to restore revision 7:

sudo duplicacy restore -r 7

Again, depending on the number and size of files in this backup, this may take some time.

Duplicacy offers some really cool features alongside the restore command. For example, you can see the contents of a file in a backup with the “cat” command, and compare differences between two backups with the “diff” command. You can see all of the options here.
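Here is a short sketch of how “cat” and “diff” might be used to inspect a single file. The wrapper names are our own, and the `DUPLICACY` variable exists only so the commands can be previewed with another binary such as `echo`. As with the other commands, run these from the directory where the repo was initialised.

```shell
# Sketch: thin wrappers around the cat and diff commands.
DUPLICACY="${DUPLICACY:-duplicacy}"

# Print a file as it was stored in the given revision.
show_file_at() {
    local revision="$1" file="$2"
    "$DUPLICACY" cat -r "$revision" "$file"
}

# Compare one file between two revisions.
diff_file_between() {
    local old="$1" new="$2" file="$3"
    "$DUPLICACY" diff -r "$old" -r "$new" "$file"
}

# Examples:
#   show_file_at 7 path/to/your/file.txt
#   diff_file_between 6 7 path/to/your/file.txt
```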

Selective restores

One of the more useful restore options is to only restore a certain file or directory from your backup. This can be accomplished with the following command:

sudo duplicacy restore -r 7 path/to/your/file.txt

This can also be extended to restore everything under a directory, like so:

sudo duplicacy restore -r 7 path/to/your/directory\*
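The backslash in the command above stops the shell from expanding the `*` itself, so that duplicacy receives the pattern unchanged. Wrapping the pattern in single quotes has the same effect. This sketch adds a basic check on the revision number. The `restore_paths` name and the `DUPLICACY` override are our own.

```shell
# Sketch: restore only the paths matching the given patterns from one revision.
DUPLICACY="${DUPLICACY:-duplicacy}"

restore_paths() {
    local revision="$1"
    shift
    # Refuse anything that is not a whole number.
    case "$revision" in
        ''|*[!0-9]*) echo "revision must be a number" >&2; return 1 ;;
    esac
    "$DUPLICACY" restore -r "$revision" "$@"
}

# Example (single quotes keep the shell from expanding the wildcard):
#   restore_paths 7 'path/to/your/directory*'
```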

Summary

Duplicacy is an extremely powerful and portable backup tool, allowing for reliable and fine-grained backups of your data. If you have any questions on duplicacy or would like any help setting it up, please leave a comment below or contact us and we’ll be happy to help. Thanks for reading.

Feature image background by 111692634@N04/ licensed CC BY-SA 2.0.