Removing support for TLS 1.0 and TLS 1.1

TL;DR

For security reasons, it is best practice to disable TLS 1.0 and TLS 1.1, but before you do this you may need to weigh up the risks to traffic from old browsers.

After disabling TLS 1.0 and TLS 1.1, any visitors using old browsers won’t be able to access your site. If you are accepting credit card payments through your website, then your customers’ security is more important than reaching every visitor. If you have a public information site, this may not be the case.

Don’t I always want the best security?

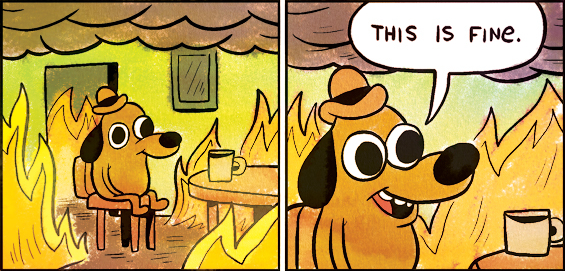

Please don’t get us wrong. We are NOT advocating blindly reducing security. This post is very much a response to customers who come to us wanting changes that will break their sites in order to get a perfect score or tick a compliance box. We can usually come up with a best-of-both-worlds approach once we show the exact implications of the change.

What’s the fuss about?

GlobalSign’s What’s Behind the Change? paragraph sums this up nicely:

Various vulnerabilities over the past few years (e.g., BEAST, POODLE, DROWN…we love a good acronym, don’t we?) have had industry experts recommending disabling all versions of SSL and TLS 1.0 for a while now. PCI Compliance was another driving factor. On June 30, 2018, the PCI Data Security Standard (DSS) required that all websites needed to be on TLS 1.1 or higher in order to comply.

RFC 7525, published in 2015, states that implementations should not negotiate TLS 1.0 or TLS 1.1 unless no higher version is available:

o Implementations SHOULD NOT negotiate TLS version 1.0 [RFC2246]; the only exception is when no higher version is available in the negotiation. Rationale: TLS 1.0 (published in 1999) does not support many modern, strong cipher suites. In addition, TLS 1.0 lacks a per-record Initialization Vector (IV) for CBC-based cipher suites and does not warn against common padding errors.

o Implementations SHOULD NOT negotiate TLS version 1.1 [RFC4346]; the only exception is when no higher version is available in the negotiation. Rationale: TLS 1.1 (published in 2006) is a security improvement over TLS 1.0 but still does not support certain stronger cipher suites.

Qualys SSL Labs have also reduced their grading for servers which support TLS 1.0 or TLS 1.1.

Assessing the risk

Who won’t be able to access my website if I disable TLS 1.0 or TLS 1.1?

Generally speaking, browsers released before 2013 will have trouble. The most common affected clients are old Android phones and old versions of Windows with Internet Explorer 10. For the exact Android versions and other affected clients this is a nice breakdown. As you’d expect, the number of visitors with these old clients will vary according to your user base, so it’s best to check your site’s analytics to inform your decision.
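If your analytics don’t show protocol versions, your server logs might. Here is a minimal sketch in Python that tallies negotiated protocols from access-log lines. It assumes a hypothetical log format in which the protocol name (for example the value of nginx’s `$ssl_protocol` variable) is the last whitespace-separated field on each line; the sample lines are made up for illustration, so adjust the parsing to whatever your own logs look like.

```python
from collections import Counter

# Protocol names as nginx's $ssl_protocol would report them (assumed format).
LEGACY = {"TLSv1", "TLSv1.1"}


def tally_protocols(lines):
    """Count requests per protocol, reading the last field of each line."""
    counts = Counter()
    for line in lines:
        fields = line.split()
        if fields:  # skip blank lines
            counts[fields[-1]] += 1
    return counts


def legacy_share(counts):
    """Fraction of requests that used TLS 1.0 or TLS 1.1."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(counts[p] for p in LEGACY) / total


# Made-up sample lines, purely for illustration.
sample = [
    '203.0.113.5 "GET / HTTP/1.1" 200 TLSv1.2',
    '203.0.113.9 "GET / HTTP/1.1" 200 TLSv1',
    '198.51.100.7 "GET /about HTTP/1.1" 200 TLSv1.3',
    '198.51.100.8 "GET / HTTP/1.1" 200 TLSv1.2',
]

counts = tally_protocols(sample)
print(dict(counts))
print(f"Share of requests on TLS 1.0/1.1: {legacy_share(counts):.0%}")
```

A share close to zero suggests disabling the old protocols will cost you very little traffic. A larger share is worth a closer look before you make the change.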

Again, you can take into account how important encryption is for your website. For example, at the time of writing it’s interesting to note that paypal.com has removed support for TLS 1.0 & 1.1 whilst google.com has not.

Summary

So what does this mean? Let’s give some examples…

If security is important to you, perhaps because you have an e-commerce site taking payments or you are an IT consultancy like ourselves where people wish to share private information, you must disable old SSL/TLS protocols so that the only way people can communicate with your site is as secure as possible.
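In practice you would normally make this change in your web server or load balancer configuration. To show the idea in code, here is a small sketch using Python’s standard `ssl` module that builds a server-side context refusing anything older than TLS 1.2. The function name and the optional certificate arguments are our own placeholders, not part of any particular product.

```python
import ssl


def make_server_context(certfile=None, keyfile=None):
    """Build a server-side TLS context that rejects TLS 1.0 and TLS 1.1."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # Clients offering only TLS 1.0 or 1.1 will fail the handshake.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if certfile:
        # Paths are supplied by the caller; none are assumed here.
        ctx.load_cert_chain(certfile, keyfile)
    return ctx


ctx = make_server_context()
print("Minimum protocol version:", ctx.minimum_version.name)
```

The context can then be handed to whatever server wraps its sockets with it. Clients that can only speak the older protocols will see a connection error rather than your site.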

If accessibility is important to you, perhaps because you are trying to share public information on a marketing or public resources site, it may be worth supporting old protocols so that your message can be shared as widely as possible.

Remember, it may typically be called a sales “funnel”, but traffic doesn’t have to end up in just one place. Users whose browsers don’t support the right levels of security can be redirected to alternative pages where they can be contacted in other ways. Why lose a sale when you don’t have to!
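As a rough sketch of that redirect idea, the function below picks a destination based on the protocol a visitor negotiated. It assumes your front end still accepts the old protocols and passes the negotiated protocol name through to your application (for example in a request header set by a reverse proxy). The page path and the function name are hypothetical.

```python
# Protocol names as a reverse proxy might report them (assumed format).
LEGACY_PROTOCOLS = frozenset({"SSLv3", "TLSv1", "TLSv1.1"})

# Hypothetical page with a phone number or email address and no sensitive forms.
LEGACY_LANDING_PAGE = "/contact-us-another-way"


def destination_for(protocol, requested_path):
    """Send visitors on old protocols to a low-risk alternative page."""
    if protocol in LEGACY_PROTOCOLS:
        return LEGACY_LANDING_PAGE
    return requested_path


print(destination_for("TLSv1", "/checkout"))
print(destination_for("TLSv1.3", "/checkout"))
```

The sensitive parts of the site stay behind modern TLS only, while visitors on older browsers still land somewhere useful.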

We’ve intentionally painted with broad strokes in this blog post. We’re happy to give specific advice if you contact us and feel free to leave a comment 🙂

